Inside Logic Pro’s pioneering AI toolset: A producer’s perspective

Apple’s latest Logic Pro update has sent the music production world into a frenzy with its integration of advanced AI capabilities. As a university at the forefront of exploring AI’s creative impact through our AI_Labs programme, BIMM University sought insights from an industry professional.

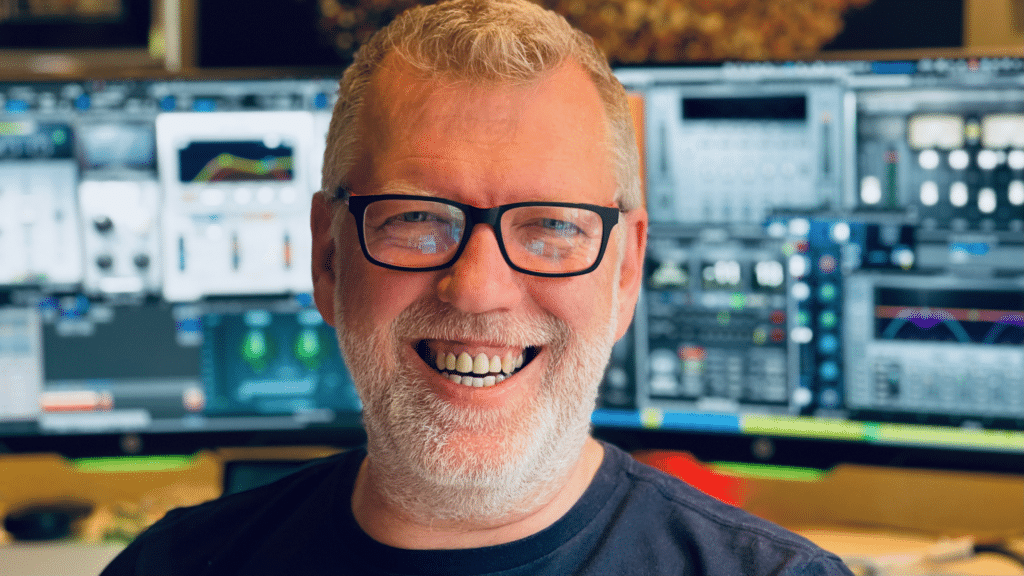

We consulted Grammy-winning sound engineer and Logic Pro expert Alan Branch for his views on the latest innovations in the software. His insights revealed both the innovative potential and the current limitations of these tools, and they will help shape how we guide our students.

At BIMM, we want students to have a balanced, forward-thinking mindset about using AI creatively. While AI tools such as Session Players are powerful aids, using them to their full potential still needs a strong base of musical knowledge. Combining timeless fundamentals with openness to new technologies will allow the next generation of musicians to thrive.

Here’s the full interview with Alan:

How intuitive do you find the new AI tools integrated into Logic Pro, and is there a steep learning curve for music producers?

Like any new feature, Session Players come with a bit of a learning curve. They operate similarly to the previous Logic Drummer, but having some musical knowledge helps. The new Session Players rely on a chord track or chords within the regions. Unfortunately, there’s no automatic audio chord recognition yet, so you’ll need to manually edit the chord track or input chords via MIDI. On the bright side, the interface is straightforward. Both the keyboard and bass players offer numerous options for adjusting playing style, timing, and feel. They can sync up with the rhythm of other tracks in your project and add fills. There are even separate hand controls for the piano.

How do you see AI tools like Session Players enhancing or changing the creative process, particularly in songwriting and composition?

It’s worth remembering we already have apps capable of generating standard music parts. Personally, I haven’t found them particularly impressive, especially for professional studios. However, Logic’s AI Session Players, now integrated into Logic Pro, offer new possibilities. With various manipulation options, these generative music instruments might accelerate the songwriting process or provide creative direction. Still, there’s a question: if AI learns from a limited set of performances, how long until some compositions start sounding familiar? Ultimately, the effectiveness depends on how these instruments fit into the overall song production. Notably, the Session Players’ real-time chord progression changes, accessible via a dropdown menu, may be a great help when sketching out melody ideas over a piano part.

Have these tools made any noticeable improvements in terms of speed and productivity in your workflow?

It’s a bit early to say. For me, features like Logic Session Players won’t make much difference. However, there are other tools, like the Waves Clarity Vx Pro plugin, that utilise AI to achieve remarkable results. By using neural networks, it can clean up a vocal in seconds. These kinds of tools go beyond what I can do manually: they are not only a huge time saver in the studio but also improve audio quality.

Are there any cool and unique features in the AI tools that assist with innovative sound design and the creation of new textures?

There are some amazing sound design and creative AI tools out there now, capable of transforming a human voice into the sound of a lion or a machine in real time. App developers like Krotos Studio have been pioneers in this field for years, and their tools are invaluable for cinematic work. For music producers, there are more and more new AI tools. I noted OpenAI has a voice clone that can transform your singing voice into a cloned voice of another singer. However, as with a lot of these tools, I’m not sure how practical this is for professional production. Here are a few notable ones I’ve come across:

Waves Clarity Vx is remarkable for cleaning up live vocals and dialogue.

Atlas 2 by Algonaut uses AI algorithms to build a unique sample library, along with a drum sequencer.

Synplant 2 has a machine learning algorithm that clones a source sound into the synth, opening a Pandora’s box of sound possibilities.

Do you find the AI tools are more suitable for certain music genres or styles, and if so, which ones?

It’s possible that genres heavily reliant on exceptional recordings and performances pose challenges for AI replication, especially when aiming for high-quality results. That said, Udio.com, a site that creates incredible AI musical content, seems to be limitless with its genre creations. Still, as with the use of samples in professional recordings, I have to question how practical these are for a commercial release.

How well do these tools support collaborative work between music producers and musicians?

As a producer, there is a collaborative fusion that goes on between what the producer wants to hear and what a session musician plays. This two-way musical conversation is common when tracking parts for a song. An AI musical sketch might be a good bridge that a producer can use to get ideas across.

For example, before recording live session players, these AI-driven musicians allow you to sketch out musical ideas. Songwriters use whatever is to hand to get a song together, including important decisions like a song’s tempo, key, rhythm, and most importantly melody. By getting these elements right up front, you can avoid wasting time and resources during extensive band recording sessions. There is also the case of AI tools making it easier for a producer and an artist to do much more on their own. If a simple piano part is all that’s needed, then Logic’s Session Player could save a lot of time.

Do the AI tools like Stem Splitter and ChromaGlow offer significant support during the mixing and mastering stages of production?

ChromaGlow, a saturation plugin, provides an array of saturation models—from modern and retro tubes to magnetic tape and FET-based harmonic distortion. It also includes classic compression options that enhance smoothness while adding tons of character. These types of saturation plugins are fabulous for enriching tone during mixing. I focus less on the AI aspect and more on the quality of sound and its effectiveness within my current project.

Stem Splitter shows how far AI has come, separating components of a stereo track into separate parts of drums, bass, instruments, and vocals. It is quite magical! However, manage your expectations regarding high quality—based on my testing, there are trade-offs inherent in the technology.

Still, it might be good enough to be useful to learn parts, research musical content, or recover a lost multitrack master for remixing. Many will use it to add to their sample libraries, but it’s worth remembering samples do need to be cleared if you’re going to use them commercially.

Are there enough customisation options in these tools to ensure creative control, or do you find them too prescriptive at times?

It’s a bit early to draw conclusions; however, the Logic Drummer has been there for a while. It’s been revamped, and I find the updated percussion player incredibly useful; playing with shakers and tambourines has never been so easy. The Piano and Bass Players are remarkably good for small parts. There are lots of options to manipulate the part, so it doesn’t seem prescriptive. There are different player types and a wealth of options, including separate main controls for intensity and complexity. I’m sure that, used enough over a whole song, we may get to recognise repeating phrases, but you could say the same about a real musician and the licks they play. As long as it’s got something like the part you want, you can then edit it yourself, as it’s all convertible to MIDI.

How valuable are these tools in an educational setting for teaching music production techniques?

For chord structures, keys, and songwriting, I think they may be a helpful educational tool. As Logic Session Players require chords via the new Chord track or in the region, I’m sure there will be a lot of discussion about this. However, you can also choose standard chord progressions. This may help students discover why I-V-vi-IV is such a popular progression. Because it’s easy to change progressions in real time, it could help students decipher why they sound characteristically different.

For production, of course, it could be a wonderful tool for seeing how alternative parts affect the feel and flow of a song, such as experimenting with a half-time section or alternative rhythmic pianos.

How do you see these AI tools evolving, and what could be their impact on the future of music production?

For version one of the new AI tools, it’s a good start in Logic. It’s sure to trigger lots more development. Another app launched in the same week as Logic’s announcement is version two of a mobile app called SongZap. This has a new AI feature that can analyse a simple guitar part, detect the key, chords, and rhythm, and generate new drums, bass, and keys parts. Over the years, I’ve seen a lot of new technology arrive and change the music-making process. Making music still requires good songs and songwriting, and I feel AI is much the same. Some of the instant generative music isn’t going to add significantly to the creative landscape, but tools that help us understand music more, create more, and bring new sounds could offer us genuine innovation as we strive to keep creating something we love.

Apple’s latest Logic Pro update has brought advanced AI capabilities into everyday music production. We’re grateful to Alan for highlighting both the potential and the limitations of AI tools like Session Players, and for emphasising the necessity of a strong musical foundation alongside these technological aids.

While tools such as Waves Clarity Vx Pro and Atlas 2 offer remarkable enhancements in speed, productivity, and creative possibilities, the integration of AI into music production still requires a balance of traditional skills and modern technology. This evolving landscape promises exciting opportunities for the next generation of music producers and artists.